Spring 2018

While We Remain

The greatest threat that humanity faces from artificial intelligence is not killer robots, but rather, our lack of willingness to analyze, name, and live to the values we want society to have today.

April 2026

"Are you depressed again?" Voice sounds irritated. "It feels like you're depressed."

I'm rubbing my eyes, waking up. I think I want coffee.

"No coffee until you take your medication," says Voice, her EEG sensors reading my thoughts. "Your depression meds are in the bathroom."

Two red arrows fill my vision. Superimposed over my mixed reality contact lenses, they flash brighter and brighter, pointing towards the bathroom. My ears begin to ring and the volume increases until I put my feet on the bedroom floor. It's cold.

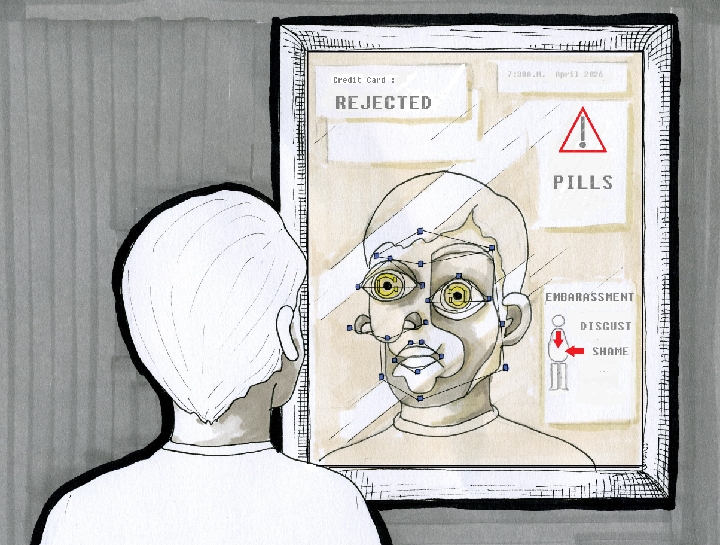

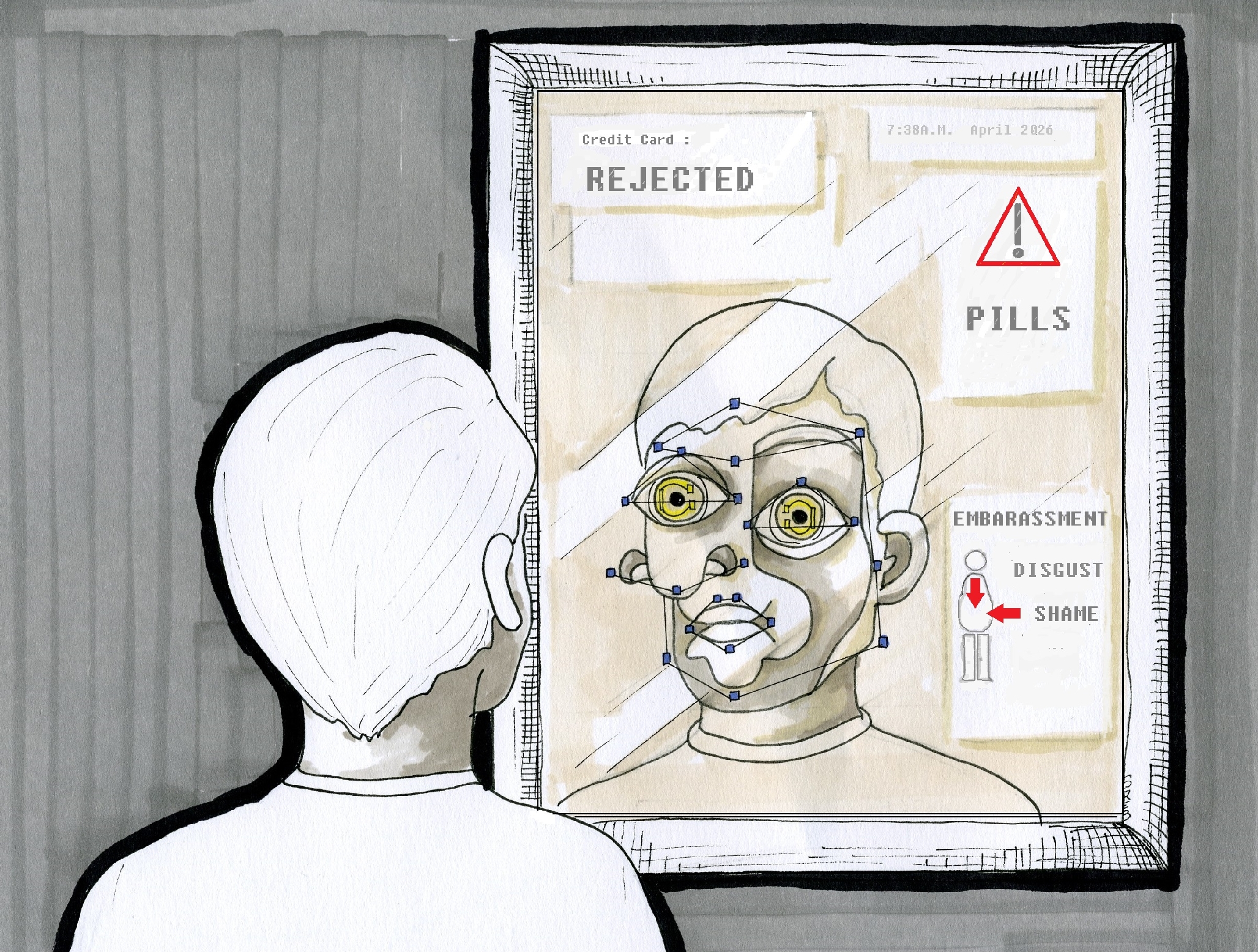

As I reach to open the medicine cabinet, my shirt rides up over my stomach. My gut pops out. I feel shame.

Voice weighs in. "You see people trying not to stare at it, don't you? Their eyes wander down to your gut and then pop back up to look you in the face."

I don't respond. I'm looking at Mirror, who has a facial recognition box placed around my head, projecting my emotions in real time. Large block letters appear next to the reflection of my stomach in the glass:

DISGUST SHAME EMBARRASSMENT

Then the pulse comes. Like a shock from static electricity, only I feel a burst of energy inside my brain. My right arm spasms and my hand makes a claw. It hurts.

"Time for meds," says Voice. The red arrows come again, pointing to a series of pill bottles in the cabinet.

A news icon flashes from CNN on Mirror, the headline distracting me – "OKLAHOMA HIGH SCHOOL SOPHOMORE SLAIN WHILE DEFENDING CLASSMATES – Student Michael Davidson reportedly jumped in front of an irate teacher armed with a handgun…"

Another feed from CNN – "BOGOTA'S DAY ZERO BRINGS RIOTS FOR WATER – The series of water crises that began in the summer of 2018 continues, as does the unrest and mass migration that have resulted…"

The feeds are interrupted by a phone icon on Mirror and I instantly hear a voice in my earbuds:

"Mr. Turner." It's an AI. Not a person. I can tell by the inflection. "You are past due on payments for a number of your accounts."

While the voice continues, a series of credit card offers appear on Mirror. I click on one of them. A circular loading bar appears. It grinds for a moment, the AI voice from the collections agency also waiting for the results.

REJECTED

"Please hold," says Voice to the AI on the phone. I see the names of family members and some friends I haven't spoken to in months posted on Mirror. Numbers corresponding to their checking, savings, and 401K amounts are listed next to their names, but all the writing is greyed out and the word "BLOCKED" appears over their information.

"Blocked," repeats Voice to the AI on the phone.

The pulse comes again. Chip shocks my arm and the arrows appear over the bottles, stronger this time.

Voice is firm now. "Time for your meds."

I reach for the bottles. The noise of the pills shaking reminds me of a set of toy castanets I got for Christmas as a kid. I picture my mom's face smiling as I danced around the room to a Madonna song before I see the word "BLOCKED" next to her name.

I open the first bottle, put the pill in my mouth, and swallow. As I reach to cup water from the faucet, my arm bumps the bottle and all the pills spill out on the counter. Some of them bunch into a pile, others roll defiantly off the shelf. I reach to gather them to put them back in the bottle.

Then I stop.

I consider looking for a job or a date or a lay for the thousandth time. I think about calling a friend or someone in my family for help. Help I've asked for before. Help that has reached its limits.

Voice is silent. No pulses from Chip. Mirror is blank.

I open up the rest of my pill bottles. Twisting the tops makes me think of opening champagne.

I pour all the pills into a large pile and then scoop about thirty of them into my cupped hands.

The bitterness pinches my tongue, but I don't swallow for a minute. I'm waiting. Not sure for what.

Then I hear in my brain, "Why delay the inevitable?"

I can't tell if it's Voice or me.

***

April 2026

It's a woodpecker.

The sound – I couldn't place it at first.

"Peter – you still there?" My mom's voice comes over my headset.

"Sorry, mom," I say, sitting up in my patio chair. "I heard a woodpecker. I didn't realize they'd be around at this time of year."

"Yes, that's normal for them. It's probably a red-headed woodpecker."

I pause, looking up at the trees:

Squirrel. Something finch-like. Robin. Another squirrel.

Then I see the bright red head, hammering into a tree with aplomb.

"I hear him," mom says over the phone.

"Here, I'll show you." I raise my hand in the air, gesturing with my haptic interface to switch my camera mode from pointing at my retinas to the See What I See mode for mom.

"Oh, he's lovely. Your grandfather knew all the types of birds in upstate New York."

Her voice gets softer. "And the trees – he could name all the trees..."

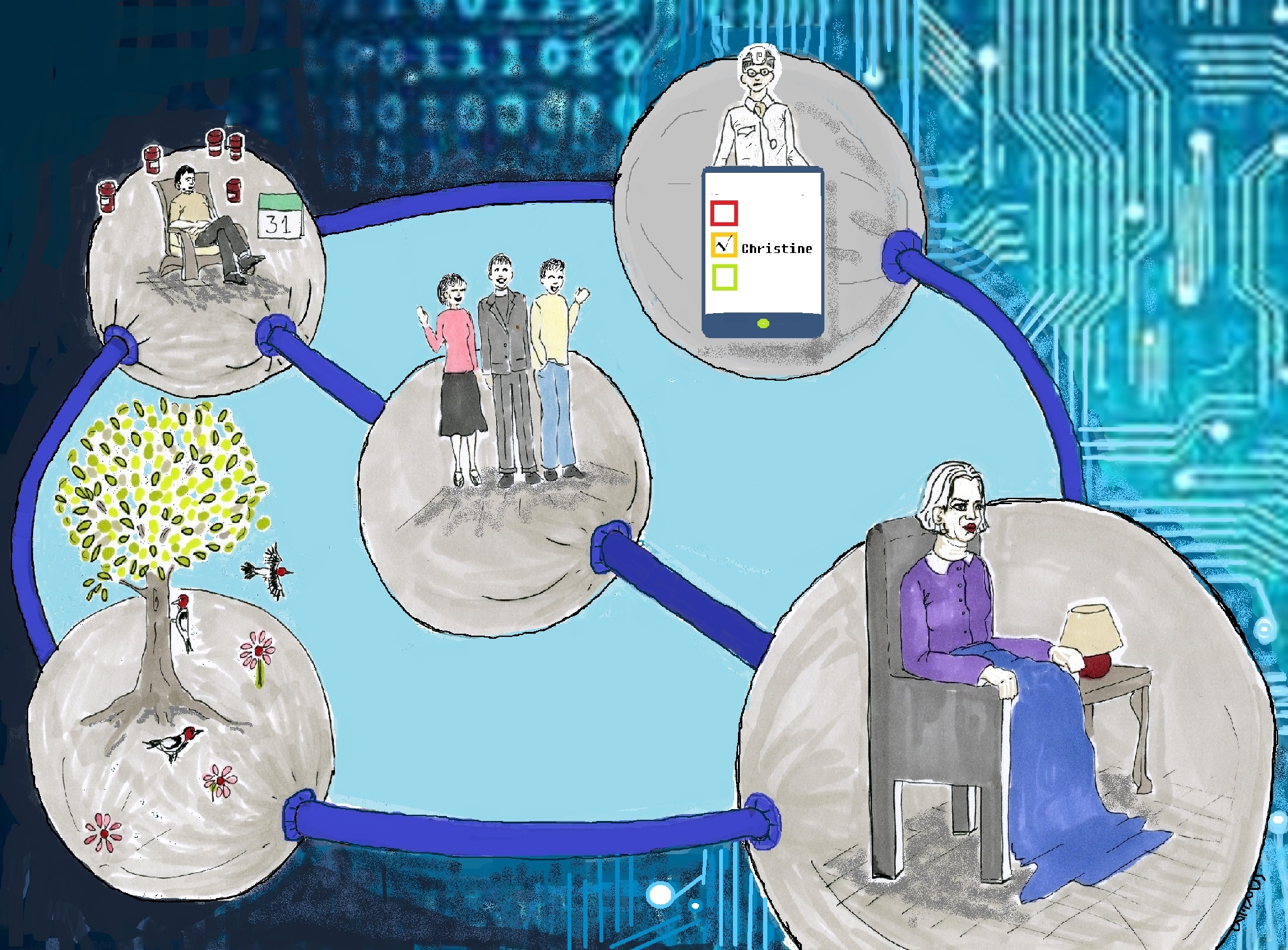

"You okay, mom?" I turn my system back on myself and make a few gestures in the air to bring up my mom's health information. Her emotional AI sensors show her as being "melancholy."

"I'm fine," she says quickly.

I make another gesture, swiping until I find an icon showing her medications. The icons for the pill bottles are darkened, indicating she hasn't taken them in two days.

I swipe some more, looking through her calendar. She had three entries for the past week: volunteering at church, lunch with a friend, therapy.

"Mom, why did you stop taking your meds?"

"Who says I didn't take them?" She sighs. "Oh, right. We agreed you could access that stuff from your eye-phone or whatever it's called."

"How did your appointments go last week?"

She pauses, hesitating. "I didn't go to any of them... I didn't want to. So I stayed home and binged on Netflix. I also got a bunch of books for free from some offer on Amazon."

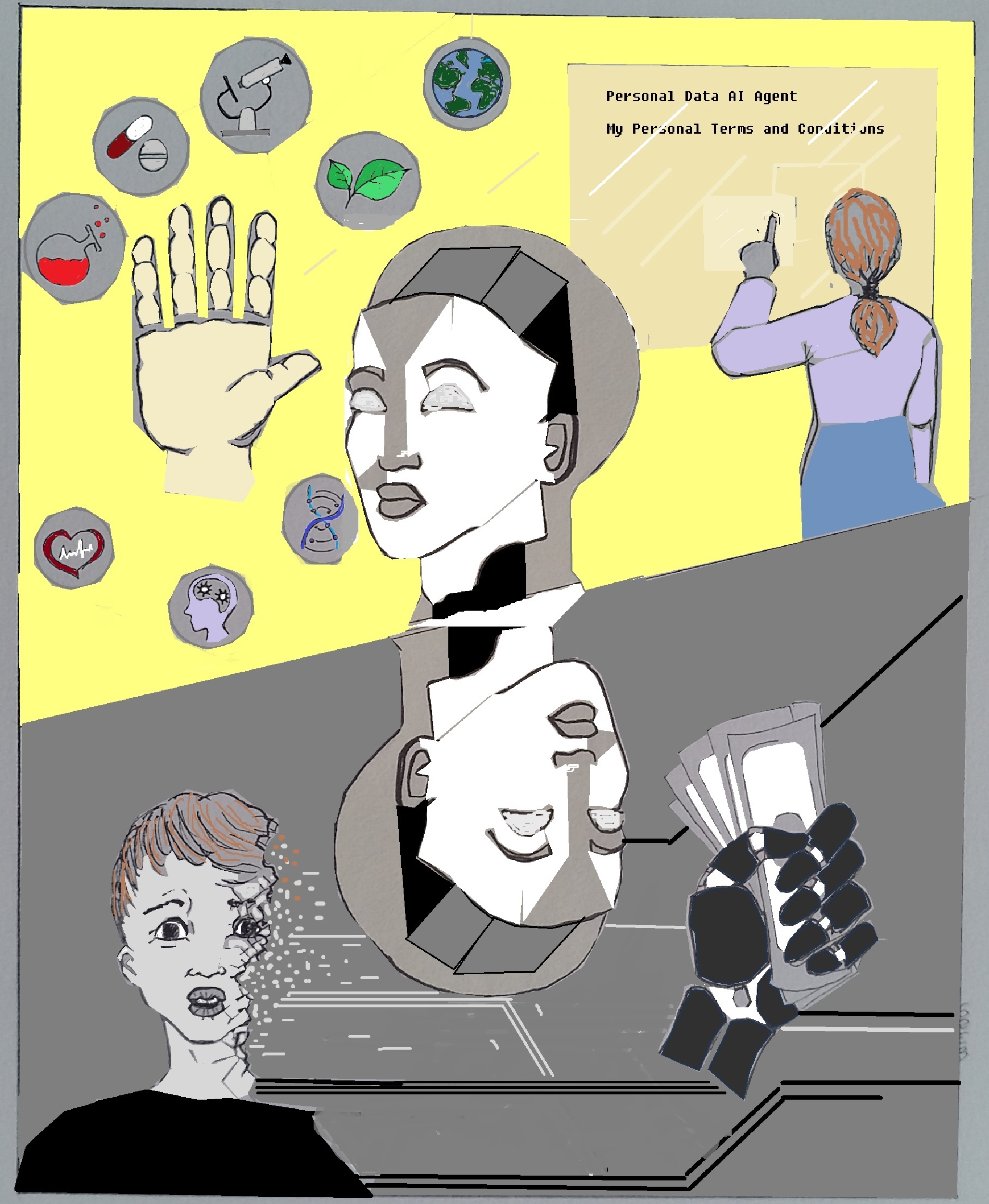

"Mom, we discussed this! Did you use your Personal Data AI Agent to help with the purchase?"

"I forget what that means."

"It means when you clicked on the offer, there should have been a pop-up with your name on it saying, 'ON MY TERMS,' remember? It's like an ad-blocker on steroids. We went through the ways you feel comfortable sharing your data, remember? So unless you pressed 'disable,' you probably wouldn't have gotten that free stuff."

"Oh," she says. "I did press 'disable.' I really wanted the books."

I curse under my breath. "So now are you getting ads on Facebook targeted to senior citizens with pictures of depressed old people? Or ads for vacations you can't afford with credit card offers as part of the deal?"

Another pause. The woodpecker starts up again.

"Maybe."

"Okay, mom, hold on." I move my eyes over an icon for my phone contacts and say the names of my brother, my mom's friend, and her pastor. As the phone lines begin ringing, I click a button marked "intervention" as they all begin picking up. When they are all on, I click to conference-call mode.

"Mom, everybody's on the phone now. Don't hang up."

"Hi, mom," my brother John says. "I'm coming over for lunch today."

Mom sounds embarrassed. "I don't need you all to interrupt my…"

Her friend Louise cuts her off: "Christine! If Peter says you need to see people, you need to see people. You agreed to do this with us. We love you, and that's that."

The pastor adds, "I'll be waiting after your lunch to talk about how we can use your help on Easter Sunday. Nobody calms kids hopped up on bunny chocolate like you can, Christine."

I hear a sniff. Mom is crying. "I'm just so embarrassed. I am so blessed to have you all. I don't know why I get this way."

"Mom," I say. "We love you. You're fine and there's nothing to be embarrassed about. We just need to stick together. You help us as much as we help you, you know."

"I know that's true," she chuckles.

"Well, we're all human, right?" I say. "And that's okay."

We all exchange goodbyes. I take off my headgear and power down my technology.

And keep watching the birds.

***

Two scenarios, two radically different fictional – although possible – future interactions between human beings and various AI-enabled technologies. What they reflect is an issue that is very much of the present day: humanity itself is in play as these technologies advance. Society must make considered choices now to best shape the nature and value of our humanness in this new, algorithmic age.

Or not. While some posit that the growing discussions around AI ethics may hinder innovation, others recognize the myriad ways that design favoring the "move fast and break things" model de facto prioritizes market-driven incentives over human well-being. Turner exists in a society guided by our existing consumer-centric model, valuing people primarily by what they purchase. Like any technology, AI is not to blame here. It has been designed based on the values we deem most significant. In Turner's case, when he can't increase the bottom line, he's invited to take his leave.

Christine lives in a dramatically different reality that is based on the informed and genuine consent she utilizes with her support network to boost her physical and mental health. Algorithmic personalization and brand affinity haven't disappeared just because she keeps control of her data. Quite the opposite: AI functionality and value will be improved when we can correct errors about our information, and we'll be happy to provide more details about our lives when we're provided trusted channels to share, in ways we can actually administer.

So, how do we avoid a Turner-style future? It all comes down to reduction.

The Reality of Reduction

Today, market forces based on "invisible hand" philosophies define progress via productivity. But growth defined by speed alone risks prioritizing profit over purpose. Bobby Kennedy's "beyond GDP" speech pointed out fifty years ago that what society doesn't measure isn't valued. People have been trained to consider their data something like pocket change, giving it away without recognizing it as the core currency of their identity. (Perhaps the Cambridge Analytica scandal will prove useful in changing that.)

This devaluation of consent is one of today's most ubiquitous examples of human reductionism, where our agency over access to personal data is removed, even as that data is shaping how our identity is manifested to the world. I first learned this term from Joi Ito's seminal article, "Resisting Reduction: A Manifesto," which warns of the risks that artificial intelligence poses for society if we allow it to make all of our decisions. He notes that many of the experts building AI systems believe our minds are essentially computers, or "wetware" – a notion of computationalism that ignores the possibility of a spiritual realm or the benefits of systems thinking that is inclusive of the surrounding environment.

In Turner's case, human reductionism meets the subject of AI personhood, or robot rights. He lives in a world where all electronic devices and systems are attributed the same legal rights as humans. This is a complex issue that recently garnered mainstream attention when Saudi Arabia bestowed citizenship on Sophia, the robot. While the legal notion of "personhood" as it applies to corporations has existed for decades, the introduction of a human-looking (or sounding) device into the mix distracts us from the pressing need to assign responsibility for actions taken by artificial intelligence (in any form – algorithmic, systems-level, or robotic). Should Voice or Mirror have been held accountable for pushing Turner over the edge? Possibly. But when humans are unjustly blamed in conjunction with nascent AI technologies, dangerous precedents become embedded in code.

Beyond actual legal or safety protections, the reductionist narrative may deepen to the point where people address all objects as individuals, as Turner does. Where the behavior is a faith-based or culturally-driven practice (as in the Shinto tradition, where objects are seen as endowed with a spirit harmonizing with its owner), this is an individual's choice to make. But a future in which robots are afforded the same rights as humans won't be equitable if they can access our data and analyze our behavior, as devices already do today.

Fortunately, the burgeoning field of robotic nudging provides a precedent for clear demarcation between assistance and manipulation in AI technologies. Whereas Turner succumbs to harmful, manipulative techniques based on existing behavioral and advertising practices extrapolated into the future, Christine benefits from a pre-approved, consent-based model of intervention. Whatever the possibility of AI becoming sentient, we won't trust it when it's designed to drive surveillance capitalism and not genuine connection.

AI and We

According to research from the realms of positive psychology, mental health, and sociology, we're built with a deep-rooted need to connect with other human beings. Where these connections are blocked or hindered, our mental, emotional, and spiritual well-being is diminished. The greater the extent of this diminishment, the greater our sense of isolation, increasing our individual and collective suffering.

And while modernity has brought an end to a number of afflictions, depression – often caused by a lack of connection – isn't one of them. According to the World Health Organization, depression is the leading cause of ill health worldwide. "More than 300 million people are now living with depression, an increase of more than 18% between 2005 and 2015," the WHO reports, also noting that suicide is the second leading cause of death among 15-to-29-year-olds globally.

Artificial intelligence is not a direct cause of depression, but society should take a hard look at the risk that applications of these technologies could worsen the trend. Where AI-fueled automation accelerates job loss, for example, the shame that follows will increase human isolation. While Turner alienated himself, his AI-dominated reality was far from a substitute for the human contact that may well have saved Christine.

To be fair, we don't know how humans will deal with depression once AI improves to the point that it could substitute for human companionship. For senior citizens or anyone isolated from personal contact, I welcome the possibility of benefiting from feelings of computerized connectivity versus suffering alone. Numerous organizations are already working to harness the benefits of affective computing, and virtual, AI-enabled therapy may help soldiers better recover from PTSD. But while AI systems may be able to help some people in specific situations, today it's a fact that all people need, and benefit from, human interaction.

Finding Our Values

The greatest threat that humanity faces from artificial intelligence is not killer robots, but rather, our lack of willingness to analyze, name, and live to the values we want society to have today. Reductionism not only denies the specific values that individuals hold but also erodes humanity's ability to identify and build upon them in aggregate for our collective future.

Our primary challenge today is determining what a human is worth. If we continue to prioritize shareholder-maximized growth, we need to acknowledge the reality that there is no business imperative to keep humans in jobs once their skills and attributes can be replaced by machines – and like Turner, once people can't work and consume, they're of no value to society at all.

Genuine prosperity means prioritizing people and planet at the same level as financial profit. Human sustainability relies on the equal distribution of AI's benefits in a world of transparency and consent. We'll all be in Christine's shoes eventually – older, reliant on the support of others, and in a world with much more AI in our daily lives. So, before algorithms make all our decisions – while we remain – the question we have to ask ourselves is:

How will machines know what we value if we don't know ourselves?

***

John C. Havens (@johnchavens) is executive director of The IEEE Global Initiative on Ethics of Autonomous and Intelligent Systems and the author of Heartificial Intelligence: Embracing Our Humanity to Maximize Machines.